Research leads to mental health innovation, takes top award at international competition

- June 28, 2023

- By Jill Young Miller

WashU Olin Professor Salih Tutun and his team took the Gold Award (first place) in the May 2023 IISE Cup Competition. Their research led to an innovation that can quickly identify common mental disorders, such as depression and anxiety.

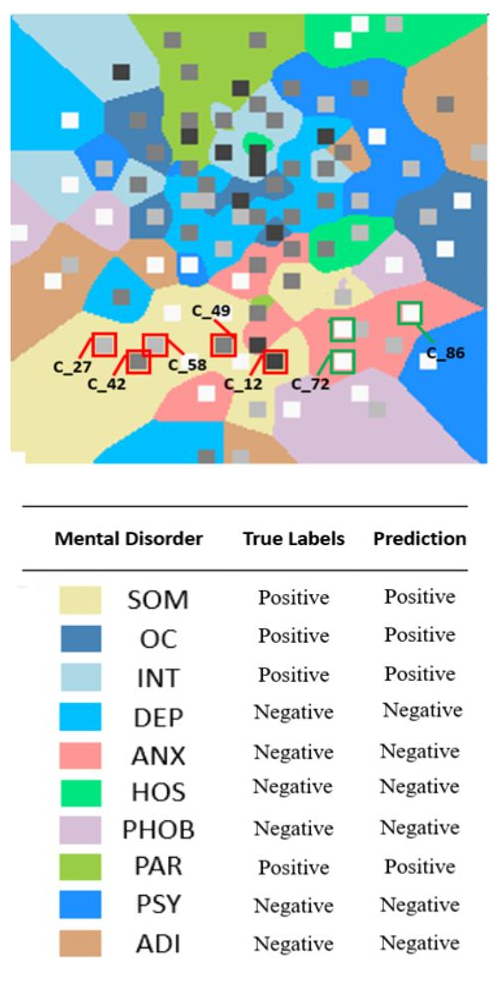

The team designed a way to map 10 common mental disorders with color imaging. Mental health professionals can use the product to screen patients and monitor their progress during treatment, and it’s already in use at a clinic in Turkey.

“Mental health experts need innovative tools to efficiently screen a large volume of potential patients for mental health disorders,” said Tutun.

The world is facing an unprecedented mental health crisis. An estimated 970 million people, nearly one in eight people globally, lived with mental disorders in 2019, according to the World Health Organization. The crisis has only gotten worse since the COVID-19 pandemic. In the United States, one in five adults experience mental disorders today, the National Alliance on Mental Illness reports.

According to a Lancet Commission report, the overall cost of mental health problems between 2016 and 2030 would surpass $16 trillion globally due to lost productivity, disability, social welfare spending for homelessness and poverty, and law-and-order spending for public safety.

“The demand for mental health services is exploding around the globe,” Tutun said. The need is growing for automated tools to support screening, diagnosis and monitoring, he said.

MDscan

The working paper about the research is titled “MDscan: An explainable AI artifact for screening and monitoring mental disorders.”

Tutun and his team presented their findings May 21 at the IISE competition in New Orleans. The Institute of Industrial and Systems Engineers runs the annual international competition to showcase solutions to business or social problems. Tutun’s team beat out competition from Amazon, Airbus, IBM, DHL and others.

The study used computational design science to develop the explainable artificial intelligence (XAI) artifact, named MDscan, for screening, diagnosing and monitoring disorders. Explainable artificial intelligence is a set of processes and methods that allows human users to comprehend and trust the results and output that machine learning algorithms create.

MDscan uses patient responses to a clinical neuropsychological test to create full-color images, similar to radiological images. It predicts which disorder or combination of disorders is afflicting a patient and the severity. It also explains the underlying logic of the prediction.

The researchers evaluated MDscan’s performance by using patient data collected from 200 mental health clinics. MDscan outperformed current clinical practice by an average of 20%, according to the research.

MDscan helps doctors manage their patient load, Tutun said, by delivering a preliminary diagnosis and classifying patients by mental condition and severity.

Shortage of mental health providers

While the demand for mental health services grows, many places suffer from a shortage of providers. Some countries report as few as one psychiatrist for every 100,000 people. The result: long wait times for appointments, delayed diagnosis and treatment, and continued suffering. Psychiatrists and counselors have called for innovative tools to boost their capacity to screen and treat patients more efficiently and effectively, Tutun said.

But clinics haven’t broadly accepted other AI tools, he said, because of their “black box” models, which lack transparency. “Without explanations, experts cannot trust these predictions for clinical use, where patients’ lives and well-being are often at stake,” Tutun said.

MDscan is a “white box” model that elaborates on which patient answers contributed to the predicted diagnosis and to what extent.
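To make the idea of a “white box” explanation concrete, here is a minimal sketch in Python. It is not MDscan’s actual method, which this article does not describe in detail. It assumes a simple linear screening score purely for illustration, and the question names, weights and answers are all hypothetical.

```python
# Illustrative only: a toy linear screening score, NOT the MDscan model.
# Question names, weights and answers below are hypothetical.

def explain_score(weights, answers):
    """Return the total score and each answer's contribution, largest first."""
    # Each answer's contribution is its weight times the patient's response.
    contributions = {q: weights[q] * answers[q] for q in weights}
    total = sum(contributions.values())
    # Rank by absolute size so the most influential answers come first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return total, ranked

weights = {"sleep_problems": 0.5, "low_mood": 1.0, "loss_of_interest": 0.75}
answers = {"sleep_problems": 2, "low_mood": 3, "loss_of_interest": 0}

total, ranked = explain_score(weights, answers)
print(f"score = {total}")
for question, contribution in ranked:
    print(f"{question}: {contribution:+.2f}")
```

In this toy example the ranked list shows which answers pushed the score up and by how much, which is the kind of transparency a “black box” model does not offer.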

Tutun is an expert in decision analysis, deep learning, design science and explainable AI for understanding how human behaviors and social interactions affect critical decisions in business.

In addition to Tutun, team members were Anol Bhattacherjee of the University of South Florida, Kazim Topuz of the University of Tulsa, Ali Tosyali of the Rochester Institute of Technology, and Gorden Li of Bosch Center for Artificial Intelligence.

“The MDscan artifact presented in this paper attempts to address this critical problem while also demonstrating how to improve explainability, user trust, confidence, and acceptance of AI predictions by explaining their AI predictions,” the authors wrote.

“We hope that our research will inspire other researchers to develop their own XAI approaches for addressing critical business and social problems in other domains, such as finance and cybersecurity, where visual and explainable representations of large complex data sets are needed to detect essential and useful patterns.”
